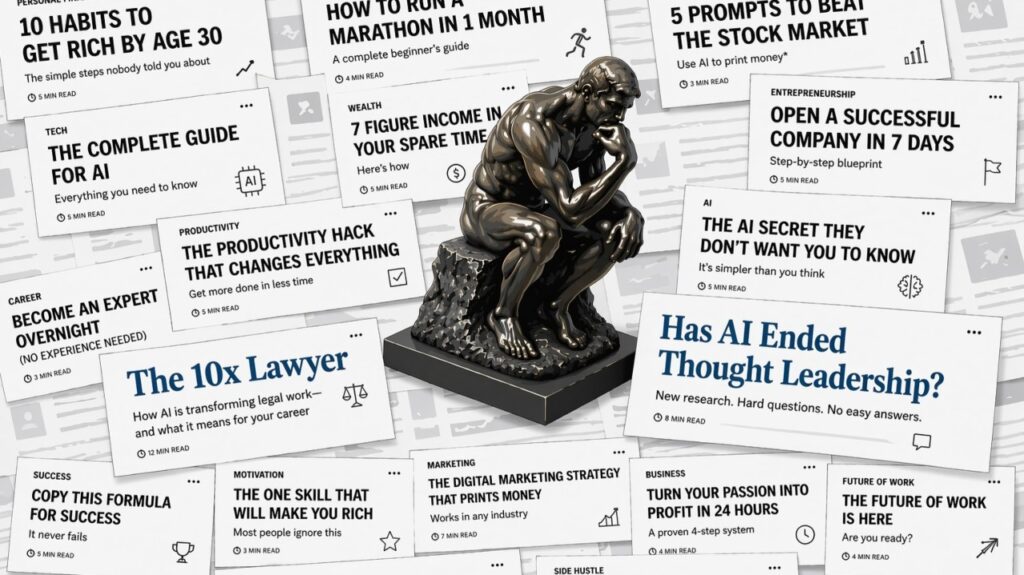

In today’s environment, choosing the right business advisory services has become increasingly difficult. We are flooded with content, opinions, and AI-generated insights that make almost anyone sound like an expert. The challenge is no longer access to information, but knowing which advice actually comes from real experience and leads to real outcomes.

Good thing we had time, as it led me down a rabbit hole: first trying to explain how to make sense of the current AI news, which paints a bleak scenario for everyone graduating from university and, at the same time, for the future of knowledge-based companies.

It is not just that we are flooded with too much content competing for our attention (and trying to keep us scrolling forever). Separating signal from noise is also getting harder, not just for consumers but for businesses as well.

The latest HBR article, “Has AI Ended Thought Leadership?”, summarizes it expertly: “It has never been easier to sound like an expert, a few prompts to your preferred AI tool and you get authority-sounding articles on anything.” I might add: and with a level of confidence that not even true experts dare to show.

“LinkedIn Top Voice” badges are being handed to those who publish a new article-length post every two days. Really? Every two days? But then again, last year multiple news outlets reported that more articles are now created by AI than by humans, and AI slop continues to expand across every platform we use.

But every now and then, thought-provoking articles like the 10x Lawyer by Zack Shapiro help make sense of the times we are living in. So, I tried to give him my advice by explaining Zack’s article, how we could very well divide into groups, and, with that in mind, how those of us who have the responsibility to lead companies are adjusting accordingly. That’s the playing field now.

The first divide

Learning things has always been hard, because you had to research them and spend time doing it. That is why media posts like “10 habits to get rich by age 30” and “How to run a marathon in 1 month” became popular: many people preferred quick fixes or shortcuts. And suddenly, AI was here.

That is why Mark Cuban’s recent quote is so important for knowing where we start. He said there will be two types of AI users: those who use AI so they don’t have to learn anything (and don’t need to think), and those who use it so they have the opportunity to learn everything.

A fellow CEO already has a mandate for the companies in his group. Every new hire must have previous experience using an AI platform to improve the outcome of a project. No exceptions.

The second divide

The 10x Lawyer article explains very well how AI has impacted the legal industry and, indirectly, the knowledge industry. In summary, in a high-stakes case or transaction with a short deadline, a law firm might need to review thousands of pages of contracts, filings, precedents, correspondence, regulations, and prior case law. A single person simply could not read, analyze, and synthesize all that material fast enough while also developing the legal strategy.

So, the work was divided across layers. A small army of junior lawyers and recent graduates handled the heavy document review, legal research, and first-pass drafting. Mid-level and senior lawyers then filtered that work, identified the strongest arguments, flagged the risks, and connected the different pieces. Finally, the partners, who had the most experience, made the strategic decisions about which position to take, which arguments to emphasize, how to negotiate, and how to present the case.

This pyramid model was effective because it allowed firms to process huge volumes of complex information under tight deadlines while concentrating the most valuable judgment at the top. But now with AI, you no longer need that many eyes reading all the material before it reaches the partners who create the legal strategy.

So, if you no longer need 20 recent graduates but only 2, what will be considered when hiring?

Before, you used GPA, the courses they took, and the name of the university, as these signaled reliability and the ability to process that type of information. And because you were hiring in volume, it was OK to have misses; they didn’t have major ramifications for the company.

But if AI is now doing most of the reading, summarizing, and first-pass drafting, then companies will look for people who can interpret nuance, demonstrate comprehension of complex materials, and see the bigger picture, as they will need to assess whether a summary is accurate and determine what truly matters in a complex situation. Don’t be surprised if, instead of traditional IQ-style tests, candidates are asked to review a dense document and write a short essay, including how it relates to their real-life experience.

It means hiring for judgment: employees whose judgment, applied through AI, produces order-of-magnitude differences in output quality and speed.

Judgment is the cognitive process of forming an opinion, evaluation, or decision by discerning, comparing, and assessing circumstances. It involves critical thinking to reach conclusions and the capability to make wise, objective decisions (good sense/discretion).

AI is a powerful amplifier: it turns poor judgment into evident mistakes, just as it amplifies excellent judgment into exceptional output. Anyone who has spent the past months working with Claude has seen firsthand how the smallest carelessness or omission can ruin the expected result. And the bigger the outcome of the project, the more interacting variables one must consider.

Obviously, poor candidates will quickly be left behind (they will keep scrolling through endless reels). But it is the gap between the best people and the average that is widening. Of the 20 who were hired before, only the best 2 will be hired. The new hires will have a much bigger impact on the company because of AI tools, as will any employee already working there.

Common Sense

This is where business advisory services should stand apart, not by sounding confident, but by demonstrating real-world judgment and accountability. Judgment will be at a premium, and the common sense to navigate all the noise will be critical.

I have been a member of Advisory Boards for almost a decade now, across several companies. With some, I have worked closely with the CEOs in steering them. Board members will say the right buzzwords with authority. As a result, untested CEOs can wrongly prioritize their focus, since all the advice sounds important. You have to remind the CEO to keep using their common sense and to consider whether there is actual experience behind the advice (just knowing the theory doesn’t count).

Now with AI, you have an advisory board for everything. And unlike the advisory board, you can also ask for assistance with execution, even in the smallest tasks. Very soon, you could start using AI to avoid thinking, when what you need is to develop your judgment, to keep walking the lines.

Back in the day when I was selling ERP implementations, we spent days gathering requirements from all business areas and always asked for the chance to walk the manufacturing floor. In a few minutes, you could sense where wrong assumptions had been made, no matter what the company assured you. Because when you have seen it often, you know that adding that many stations to that type of line is not practical, or that they would benefit more from best practices learned in the pharma industry than from those of their own sector. Or that a WMS, as it is, will not work without customizing some of it, preferably on the handhelds.

It is knowing not to trust the AI news claiming that ALL enterprise software is dead because software can be generated faster. It was never about rapidly coding the software; it was about which top strategies and practices could be implemented to compete better. The business owners were purchasing new knowledge. Expert advice.

Business Advisory

When evaluating business advisory services, the problem becomes clear. AI amplifies all types of opinions, making it harder for companies to distinguish between advisors who understand the theory and those who can actually execute.

I am the CEO of a company that assists tech companies in setting up and running operations in Mexico. We have had cases of end users wrongly claiming that a labor law was being broken on a particular topic because they personally thought we were wrong and ChatGPT confirmed to them that they were right. We had to generate a legal response explaining the laws, even though the user’s reasoning didn’t make sense business-wise (forget even the legal part), and even though we had 40 years of experience versus someone using Google.

We are not alone in this. There are cases where AI has led non-experts to believe they can use it to defend their position, wasting time and resources for everyone involved, as companies need to defend against this behavior. For example, one person continued to sue using ChatGPT, even though it was wrong, while the counterparty spent $300,000 defending against an avalanche of fabricated filings in a case that had already been resolved. It countersued for $10.3 million, including punitive damages.

This is not going away soon. MIT published a paper in February 2026 on the tendency of chatbots to validate a user’s expressed opinions and trap even perfectly rational users in a feedback loop of false beliefs. Industry “fixes” such as strict factuality were shown to be insufficient: even if a chatbot is forced to tell only the truth, it can still cause spiraling by “cherry-picking” only the specific truths that reinforce the user’s delusion.

So if you need business advice in a field where the company doesn’t yet have experience, how do you know who the expert is?

Don’t hire someone just because they sound confident when giving a presentation. Hire someone who will actually implement the expert advice, someone who will not only provide their analysis but will also have responsibility for the results.

For example, a foreign company that hires our firm’s services to launch operations in Mexico is avoiding a costly and time-consuming learning curve. It’s not going to hire a vendor that is new to Mexico and will learn along with them; that would be counterproductive. It’s common sense.

Conclusion

We think we are entering a phase where access to knowledge is no longer a constraint; you don’t have to spend years studying to sound competent in a field. This might not hold for long, as the rapid rise of AI-generated content is creating a dangerous feedback loop where AI systems increasingly train on content produced by other AI systems rather than original human knowledge.

Like making copies of copies, each generation could become more distorted, while the gaps are filled with authority-sounding but incorrect information. This problem is amplified by the already declining editorial article clicks, as AI answers the question first instead of the user navigating to the listed articles (ending the newspaper job of the experienced business author).

The advantage will not come from information access, but from knowing what matters. From asking better questions. From recognizing when something doesn’t make sense, even if it is presented with confidence and perfect grammar. From having the discipline to use AI as a tool to enhance thinking, not replace it.

No wonder the 10x Lawyer article mentions the word “judgment” 32 times.

Companies, when hiring, will look for that trait and, at the same time, will need to review their own talent based on how much impact each person will generate as AI amplifies the good and the bad. The companies that will benefit most from business advisory services will not be those that choose the most articulate advisors, but those that select partners with proven execution and accountability. They will need to focus on developing critical thinking at all levels of the company, which, by the way, will become more horizontal.

Company leaders will have to spend additional time screening for those who can be truly trusted advisors: those who have walked the talk and, more than ever, have “skin in the game.” At Everscale, for example, we not only advise on the planning process but are also liable in Mexico for the nearshore operations that clients have started to run.

For individuals, especially those just starting out, don’t optimize for shortcuts. For companies evaluating business advisory services, the difference between advice and execution will ultimately define the outcome.

Don’t use AI to avoid the hard work of learning. Use it to accelerate your understanding and test your reasoning in real-world scenarios, to refine your judgment. Because in a world where AI can do the work of many, your value will come from how well you direct it.

The people who will stand out will not just sound like experts; they will be the ones who have developed the gut feeling to know when something is off. They will infer what or who caused it, and will implement what needs to be done using the newest tool. That’s who companies will hire, either as new hires or as business advisors.

Sources

1. Winsor, J. “Has AI Ended Thought Leadership?” Harvard Business Review. https://hbr.org/2026/03/has-ai-ended-thought-leadership

2. Paredes, J.L., Smith, E., Druck, G., & Benson, B. “More Articles Are Now Created by AI Than Humans.” Graphite.io. https://graphite.io/five-percent/more-articles-are-now-created-by-ai-than-humans

3. Ulea, A. “2025 Was the Year AI Slop Went Mainstream. Is the Internet Ready to Grow Up Now?” Euronews, December 28, 2025. https://www.euronews.com/next/2025/12/28/2025-was-the-year-ai-slop-went-mainstream-is-the-internet-ready-to-grow-up-now

4. Shapiro, Z. “The 10x Lawyer.” LinkedIn Pulse, March 11, 2026. https://www.linkedin.com/pulse/10x-lawyer-zack-shapiro-xov0e/

5. “Mark Cuban on 2 Types of AI Users.” Business Insider, February 2026. https://www.businessinsider.com/mark-cuban-two-types-of-people-using-ai-learning-lazy-2026-2

6. Majic Predin, J. “Thoma Bravo Says Public Markets Have Software Wrong, and Is Positioning to Buy.” Forbes, March 25, 2026. https://www.forbes.com/sites/josipamajic/2026/03/25/thoma-bravo-says-public-markets-have-software-wrong-and-is-positioning-to-buy/

7. Shapiro, Z. “The Input Layer.” LinkedIn Pulse, March 25, 2026. https://www.linkedin.com/pulse/input-layer-zack-shapiro-nuyte/

8. Chandra, K., Kleiman-Weiner, M., Ragan-Kelley, J., & Tenenbaum, J.B. “Sycophantic Chatbots Cause Delusional Spiraling, Even in Ideal Bayesians.” arXiv:2602.19141 [cs.AI], February 22, 2026. https://arxiv.org/abs/2602.19141

9. Jay, A. “The Ouroboros: When AI Models Eat Their Own Tail.” Digitalisation World, December 21, 2025. https://digitalisationworld.com/blogs/58657/the-ouroboros-when-ai-models-eat-their-own-tail

10. Koe, D. “I’m begging you to write more essays.” April 2, 2026. https://letters.thedankoe.com/p/im-begging-you-to-write-more-essays

11. Dorsey, J. & Botha, R. “From Hierarchy to Intelligence.” Block, 2026. https://block.xyz/inside/from-hierarchy-to-intelligence